Few areas of technology have evolved as fast as deep learning, which powers many of today's smartest tools. Phones unlock with facial recognition, voice assistants like Alexa respond to spoken commands, and cars steer themselves; behind each is a deep neural network. As data piles up faster than ever, hand-written rules fall short, and this is where layered models step in. Machines now tackle messy problems that were once too hard for traditional algorithms or basic pattern-matching methods.

What is Deep Learning?

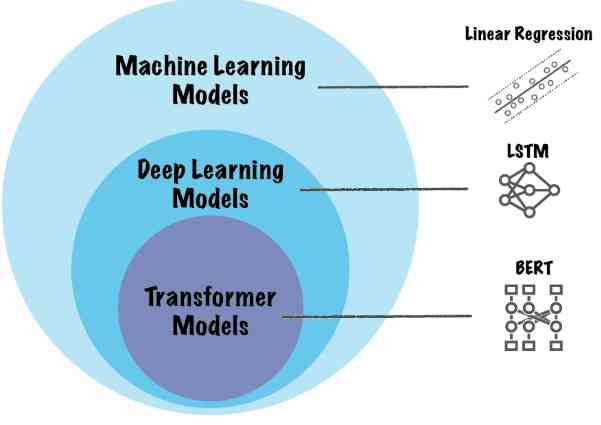

Deep learning is a subset of machine learning, just as machine learning is a subset of artificial intelligence. Instead of simple models, it relies on neural networks with many layers. Each layer transforms the data a little further, so the network builds up understanding gradually: early layers pick up simple patterns, and later layers combine them into more abstract ones. The intelligence comes not from hand-written rules but from weights shaped by exposure to data.

Most older machine learning methods need people to pick out key features by hand. Deep learning instead extracts meaningful patterns directly from raw inputs such as audio, images, text, or video.

Key characteristics of deep learning:

- Uses multi-layered neural networks

- Learns hierarchical data representations

- Improves performance with more data

- Requires high computational power

Deep learning often works well on messy, complicated inputs, such as identifying objects in photos or interpreting natural speech, because it does not need explicit rules. The patterns in such data are hard to specify by hand, but a network with many layers can learn them from examples.

How Does Deep Learning Work?

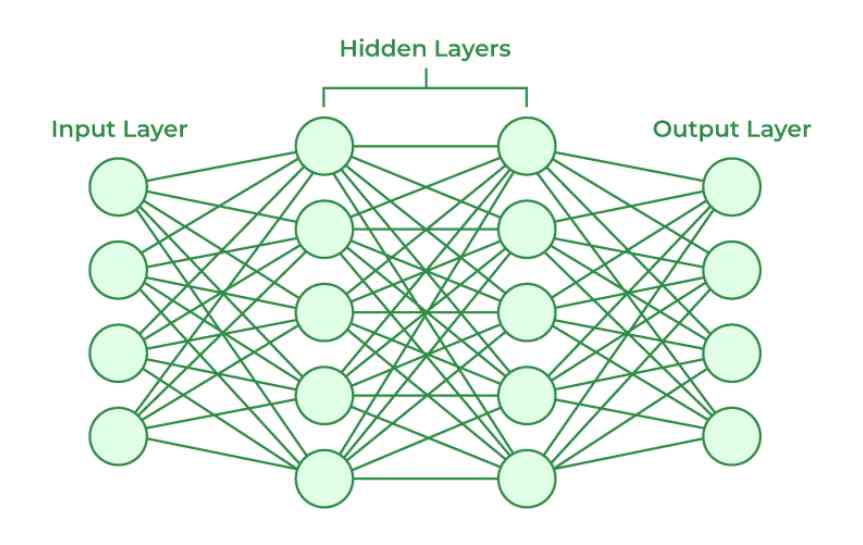

At the heart of deep learning are neural networks inspired by the structure of the human brain. These networks consist of interconnected units called neurons that process and transmit information.

1. Neural Networks and Layers (Input, Hidden, Output)

A neural network is organized into layers. Data enters through the input layer, passes through one or more hidden layers, and leaves through the output layer. Each layer transforms the values it receives before passing them on to the next:

- Input Layer: Receives the raw data, e.g., the pixels of an image.

- Hidden Layers: Do the deeper work, such as feature extraction and complex transformations.

- Output Layer: Produces the final prediction or classification.

A single neuron computes a weighted sum of its inputs plus a bias, then passes the result through an activation function such as ReLU or sigmoid. Softmax is typically used in the output layer of a classifier, where it turns a layer's raw scores into probabilities.
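To make the neuron computation concrete, here is a minimal sketch in plain Python. The function names, inputs, weights, and bias are illustrative assumptions, not values from any particular model.

```python
import math

def relu(x):
    # ReLU keeps positive values and clips negatives to zero
    return max(0.0, x)

def sigmoid(x):
    # Sigmoid squashes any real number into the range (0, 1)
    return 1.0 / (1.0 + math.exp(-x))

def softmax(scores):
    # Softmax turns a list of scores into probabilities that sum to 1.
    # Subtracting the maximum first avoids overflow in exp().
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def neuron(inputs, weights, bias, activation):
    # Weighted sum of the inputs plus a bias, followed by the activation
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Hypothetical example values (the weighted sum here is negative)
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.1

print(neuron(inputs, weights, bias, relu))     # negative sum is clipped to 0.0
print(neuron(inputs, weights, bias, sigmoid))  # a value between 0 and 1
print(softmax([2.0, 1.0, 0.1]))                # three probabilities summing to 1
```

The same weighted sum gives different outputs depending on the activation, which is why the choice of activation function is part of a network's design.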

2. Training Process and Backpropagation

A deep learning model improves by working through training examples many times. Each pass makes small adjustments to the model's weights, and the error on each prediction guides how those weights change. The cycle repeats until the outputs match the expected results closely enough. Each training iteration involves:

- Forward propagation of data through the network

- Calculation of error (loss function)

- Backpropagation of the error through the network to compute gradients

- Adjustment of weights using optimization algorithms like gradient descent

Over time, the system keeps adjusting until performance reaches a suitable level.
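The four steps above can be sketched on the smallest possible model: a single neuron with one weight and one bias, fitted with gradient descent. This is an illustrative toy, not a full network; with only one layer, backpropagation reduces to a single application of the chain rule. The data, learning rate, and epoch count are assumptions chosen for the example.

```python
# Toy data generated from y = 2x + 1 (illustrative assumption)
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

def train(data, lr=0.05, epochs=2000):
    w, b = 0.0, 0.0  # start from arbitrary weights
    n = len(data)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, y in data:
            pred = w * x + b         # 1. forward propagation
            error = pred - y         # 2. loss for this example is error ** 2
            grad_w += 2 * error * x  # 3. chain rule: d(loss)/dw
            grad_b += 2 * error      #    chain rule: d(loss)/db
        # 4. gradient descent step on the mean gradient
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

w, b = train(data)
print(w, b)  # should end up close to 2 and 1
```

In a multi-layer network the same loop applies, but step 3 passes gradients backward through every layer in turn.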

3. Role of Large Datasets and GPUs

Deep learning models require:

- Large datasets to avoid overfitting

- Powerful hardware, particularly GPUs and TPUs (tensor processing units)

GPUs make training large neural networks practical because they parallelize the matrix arithmetic that dominates training. That speedup expands the size of the models and datasets that are feasible to work with.
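As a rough illustration of why matrix math dominates, the sketch below multiplies two matrices in plain Python and counts the multiply-add operations for one layer. The layer sizes are hypothetical, and real frameworks use optimized GPU kernels rather than loops like these.

```python
def matmul(a, b):
    # Each output element is an independent dot product, which is
    # why this operation is easy to spread across many GPU cores.
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]

def multiply_add_count(batch_size, n_inputs, n_outputs):
    # Number of multiply-add operations for one fully connected layer
    return batch_size * n_inputs * n_outputs

print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]

# Hypothetical layer: batch of 64, 1024 inputs, 1024 outputs
print(multiply_add_count(64, 1024, 1024))  # 67108864
```

A single modest layer already needs tens of millions of operations per batch, and training repeats this for every layer and every batch.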

Types of Deep Learning Models

1. Artificial Neural Networks (ANN)

Artificial neural networks (ANNs) are the most basic type of deep learning model. In the feedforward form described here, data moves in one direction, layer by layer, from input to output. They are commonly used for:

- Regression problems

- Binary and multi-class classification

- Structured/tabular data

Their layers are fully connected: every neuron links to every neuron in the next layer. This design is the foundation that the other architectures build on.
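A fully connected network can be sketched as a chain of weighted sums. The example below is a minimal forward pass with two inputs, three hidden neurons, and one output. All weights and biases are hand-picked assumptions for illustration, not trained values.

```python
def dense(inputs, weights, biases):
    # weights[j] holds the incoming weights of output neuron j,
    # so every input connects to every output neuron.
    return [
        sum(x * w for x, w in zip(inputs, row)) + b
        for row, b in zip(weights, biases)
    ]

def relu_layer(values):
    return [max(0.0, v) for v in values]

# Hypothetical parameters: 2 inputs -> 3 hidden neurons -> 1 output
W1 = [[1.0, -1.0], [0.5, 0.5], [-1.0, 1.0]]
B1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 2.0, 1.0]]
B2 = [0.5]

def forward(x):
    hidden = relu_layer(dense(x, W1, B1))  # hidden layer with ReLU
    return dense(hidden, W2, B2)           # output layer, no activation

print(forward([2.0, 1.0]))  # [4.5]
```

This tiny network has 13 parameters (6 + 3 in the first layer, 3 + 1 in the second); the count grows with the product of adjacent layer sizes.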

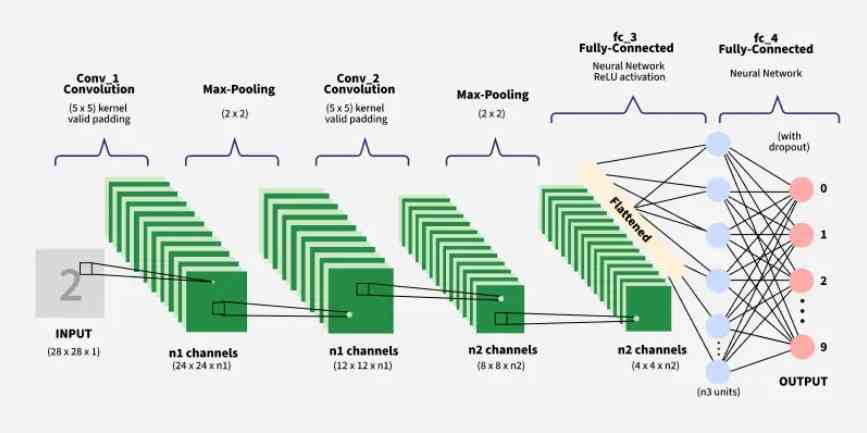

2. Convolutional Neural Networks (CNN)

CNNs are designed for image and video data. Their layers detect features such as edges, textures, and shapes by sliding small filters across the input, an operation called convolution.

Key applications:

- Image classification

- Facial recognition

- Medical image analysis

- Object detection

Because each filter's weights are shared across the whole image, CNNs need far fewer parameters than fully connected networks of comparable size.
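The sliding-filter idea can be sketched in a few lines. The example below applies a hand-made vertical edge filter to a tiny 4x4 image; both the image and the filter are illustrative assumptions. Like most deep learning libraries, it does not flip the kernel, so strictly speaking it computes a cross-correlation.

```python
def conv2d(image, kernel):
    # Slide the kernel over every position where it fits entirely
    # inside the image (no padding, stride 1).
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Hypothetical 4x4 image: dark on the left, bright on the right
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical 2x2 filter that responds to dark-to-bright vertical edges
kernel = [
    [-1, 1],
    [-1, 1],
]

print(conv2d(image, kernel))  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The output is nonzero only in the column where the edge sits. The filter has 4 weights regardless of image size, whereas a fully connected layer mapping the same 16 inputs to 9 outputs would need 144.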

3. Recurrent Neural Networks (RNN)

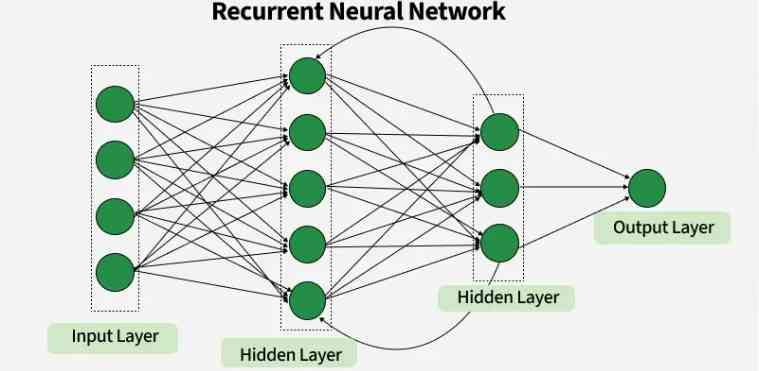

Recurrent Neural Networks (RNNs) differ from regular neural networks in how they process information. While standard neural networks pass information in one direction, i.e., from input to output, RNNs feed information back into the network at each step.

Key Components of RNNs

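- Recurrent neurons: Units that keep a hidden state and update it at each step from the current input and the previous state.

- RNN unfolding: Viewing the network as a chain of copies of itself, one per time step, which is how it is analyzed and trained.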

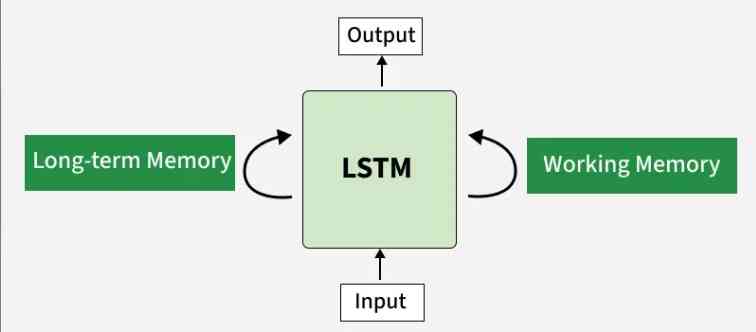
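The feedback loop in an RNN can be sketched with a single recurrent unit whose hidden state is one number. The weights below are illustrative assumptions; real RNNs use vectors and weight matrices, but the update rule has the same shape.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    # The new hidden state depends on the current input
    # and on the previous hidden state.
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    # "Unfolding": the same step, with the same weights,
    # is applied once per element of the sequence.
    h = 0.0
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

print(run_rnn([1.0, 0.0, 0.0]))  # the first input still echoes in later states
print(run_rnn([1.0, 0.0])[-1], run_rnn([0.0, 1.0])[-1])  # order changes the result
```

Because the hidden state carries over between steps, the same inputs in a different order produce a different final state, which is what makes RNNs suited to sequences.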

4. Long Short-Term Memory (LSTM)

LSTM is a special kind of RNN designed to address the vanishing gradient problem. In a standard RNN, the training signal fades as it is propagated back through many time steps, so the network struggles to learn long-range patterns. An LSTM adds a cell state and gates that control what is stored, what is forgotten, and what is output, which lets it retain important information for longer.

Advantages:

- Captures long-range dependencies

- Handles sequential patterns effectively

- Widely used in natural language processing and speech analysis
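The gating idea can be sketched with a scalar LSTM cell that follows the standard LSTM update equations. The parameter names and values are hypothetical; they are chosen so that the forget gate stays almost fully open and the input gate almost fully closed, which shows how the cell state can persist across many steps.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    # Each gate looks at the current input and the previous hidden state
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate value
    c = f * c_prev + i * g   # keep part of the old memory, add part of the new
    h = o * math.tanh(c)     # expose part of the memory as the output
    return h, c

# Hypothetical parameters: remember almost everything, write almost nothing
params = {
    "wf": 0.0, "uf": 0.0, "bf": 10.0,
    "wi": 0.0, "ui": 0.0, "bi": -10.0,
    "wo": 0.0, "uo": 0.0, "bo": 0.0,
    "wg": 0.0, "ug": 0.0, "bg": 0.0,
}

h, c = 0.0, 1.0  # start with a value stored in the cell state
for _ in range(50):
    h, c = lstm_step(0.0, h, c, params)

print(c)  # still close to the original 1.0 after 50 steps
```

In a trained LSTM the gate parameters are learned, so the network itself decides when to hold on to information and when to overwrite it.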

5. Transformers

Transformers reshaped how machines process human language and changed the direction of deep learning.

Key features:

- Attention mechanisms

- Parallel processing

- High scalability

Well-known transformer models include BERT, used in many search tools; GPT, used for text generation; and T5, used for translation and summarization. These models underpin much of today's language technology.
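The attention mechanism listed among the key features can be sketched as scaled dot-product attention, the form used in the original transformer design. This is a single-head sketch in plain Python with made-up queries, keys, and values; production models add learned projections, multiple heads, and batched matrix operations.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    # For each query: score every key, turn the scores into weights,
    # and return the weighted average of the values.
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Hypothetical example: the query matches the first key more closely
queries = [[1.0, 0.0]]
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]

print(attention(queries, keys, values))  # leans toward the first value
```

Each query is handled independently of the others, which is what allows the parallel processing noted in the feature list.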

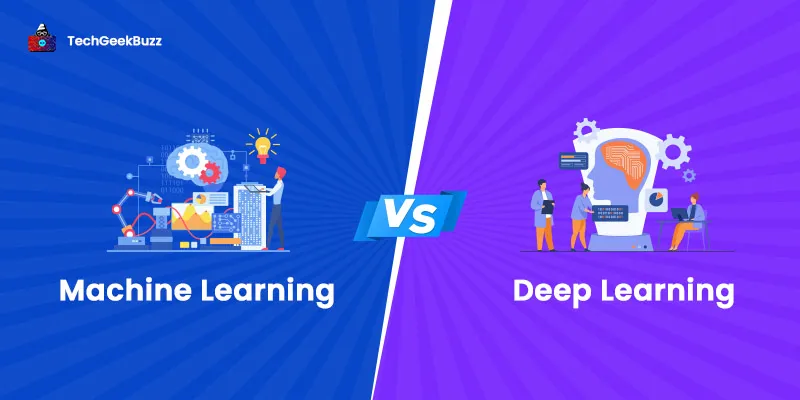

Deep Learning vs Machine Learning

| S.No. | Deep Learning | Machine Learning |
| --- | --- | --- |
| 1 | Feature learning is automatic. | Features are mostly engineered by hand. |
| 2 | Requires very large datasets. | Works with small to medium datasets. |
| 3 | Needs very high computational power. | Needs low to moderate computational power. |
| 4 | Accuracy on complex data is very high. | Accuracy on complex data is moderate. |
| 5 | Interpretability is lower than in machine learning. | Interpretability is higher than in deep learning. |

Use Cases for Each

1. Machine Learning

Machine learning is widely used in systems that need to analyze data, recognize patterns, and make predictions. It helps organizations automate decision-making and improve efficiency across many industries.

Common use cases include:

- Credit scoring: Financial institutions analyze customer data to determine creditworthiness and assess loan risks.

- Spam detection: Email systems use machine learning algorithms to identify and filter unwanted messages automatically.

- Recommendation filters: Online platforms analyze user behavior to recommend products, movies, music, or other content.

Machine learning models improve over time as they process more data, making predictions more accurate.

2. Deep Learning

Deep learning is a specialized area of machine learning that uses artificial neural networks to process large amounts of complex data. It is particularly effective for tasks involving images, speech, and large datasets.

Examples include:

- Image recognition: Systems can identify objects, people, or patterns in images and videos.

- Speech translation: Deep learning models convert spoken language into text and translate it between languages.

- Autonomous driving: Self-driving vehicles analyze road conditions, obstacles, and traffic signals in real time.

Deep learning enables machines to perform tasks that traditionally required human intelligence.

Applications of Deep Learning

1. Image and Facial Recognition

Deep learning is widely used in image and facial recognition technologies. Smartphones use facial recognition to unlock devices securely, while surveillance systems and social media platforms use similar technology for identity verification and photo tagging. These systems analyze facial features and patterns to recognize individuals accurately.

2. Speech Recognition and NLP

Deep learning powers many speech recognition and natural language processing systems. Technologies such as voice assistants, speech-to-text applications, and language translation tools rely on neural networks to understand and process human speech and text. Examples include digital assistants and automated transcription systems.

3. Recommendation Systems

Many online platforms rely on deep learning-based recommendation systems to personalize user experiences. Services such as Netflix, Amazon, and Spotify analyze user preferences, viewing history, and behavior to recommend movies, products, and music tailored to each individual. These systems help improve user engagement and satisfaction.

4. Autonomous Vehicles

Self-driving vehicles rely heavily on deep learning technologies to navigate roads safely. Neural networks process visual and sensor data to detect objects, recognize traffic signs, identify lane markings, and make driving decisions in real time. This technology plays a key role in the development of intelligent transportation systems.

5. Healthcare and Medical Imaging

Deep learning is transforming healthcare by assisting doctors in diagnosing diseases and analyzing medical data. It is used in medical imaging to detect tumors, identify abnormalities in scans, and support drug discovery research. These technologies help improve diagnostic accuracy and medical decision-making.

Future of Deep Learning

1. Emerging Trends

Deep learning continues to evolve rapidly with new advancements and applications. Emerging technologies allow systems to generate text, images, audio, and video automatically.

These innovations help:

- Automate content creation

- Improve digital tools and productivity software

- Support creative and analytical tasks

2. Integration of Generative AI

Generative technologies built on deep learning can create realistic content such as images, music, and written text. These systems are used in creative industries, design tools, and productivity platforms. They help automate complex tasks while enhancing creativity and efficiency.

3. Ethical Considerations

As deep learning systems become more powerful, ethical concerns are increasingly important. Some challenges include:

- Bias in training data that may affect decision-making fairness

- Lack of transparency in complex neural network models

- Privacy concerns related to personal data usage

- The environmental impact caused by the large computational resources needed to train deep learning models

Conclusion

Deep learning lets machines find intricate patterns in huge amounts of data. It outperforms older techniques in vision, speech, and decision-making, and much of modern technology quietly depends on it. Behavior that once required rigid programming now emerges from layered learning, expanding what software can do.

Deep learning needs lots of data and serious computing power, but its benefits often outweigh those costs. With better hardware and ongoing research, progress continues. The technology is likely to shape how artificial intelligence evolves, how automation systems improve, and how people interact with computers. Anyone interested in AI, data science, or emerging technology should understand how deep learning works.
