Docker is a free, open-source container-management platform that makes it easier to build, develop, and deploy applications. It is a collection of platform-as-a-service products that let you build, test, and deploy applications in isolated, virtualized environments. It is often used to integrate new technologies, and it simplifies deploying applications across multiple systems.

A Docker-based application starts up the same way on every system. Hence, if the app runs on your local machine, it should run on any machine that supports Docker. That's indeed fantastic! It streamlines your entire application development process and can be a useful tool for delivering software continuously. It lets you create applications, share them with your team, test them, and deploy them to production, all through a single platform. It creates a packaged environment that contains the libraries, binaries, packages, dependencies, and system configuration files that your application requires. The main advantage is that this makes your application portable across hosts.

Although the Docker architecture and platform are relatively easy to master, new users may be confused by some Docker-specific terms. Common ones include images, Dockerfiles, the Docker Engine, volumes, and containers, and you will need to become comfortable with them. Grasping these fundamental elements early will speed up learning how to work with Docker. In particular, you should be aware of two key concepts as you begin: Docker containers and Docker images. The difference between a Docker image and a container can be confusing at first. We'll break them down in this post so that you can understand them better.

What are Docker Images?

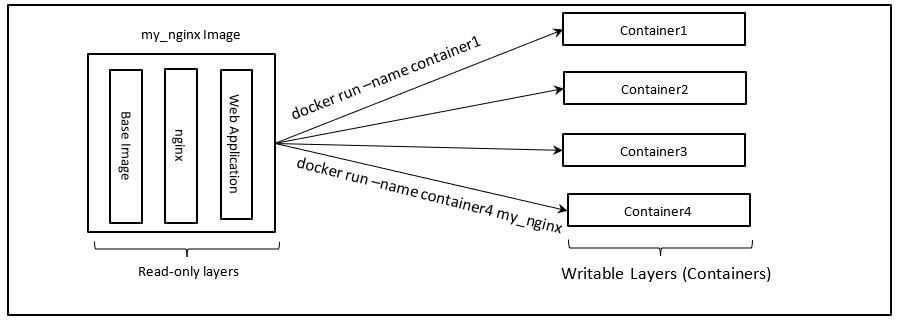

In the above diagram, on the left, you can see a customized Nginx web server Docker image, and on the right, a few writable containers created from that image. Now, let's see what Docker images actually are.

Docker images can be considered a blueprint of the application environment that you want to create. They are read-only, but you can add further layers on top of a base image to create your own customized images. A Docker registry such as Docker Hub contains tons of useful base images, such as ubuntu, centos, nginx, java, and python. You can pull these images to your local machine and either build customized images on top of them or use them as they are to create working environments called Docker containers.

Docker containers are simply running instances of the blueprint described by a Docker image. You can create several containers from a single image, and none of them will interfere with each other's processes. You can even create a network of these containers, either on the same machine or on different machines, and expose ports so that they can communicate and share information. But we will talk about containers later on. Let's understand Docker images first.

A Docker image is an immutable (unchangeable) file that stores everything an application needs: source code, binaries, packages, libraries, configuration files, dependencies, etc. You can also call images snapshots because they are read-only. They represent the blueprint of an environment, typically that of an application you want to run. One of Docker's best features is its consistency. It allows programmers to evaluate and experiment with software in a controlled, reliable, stable, isolated, and packaged environment. You can't start or run an image directly, because it is essentially just a template. Instead, you build a container using that template as a base.
In the end, a container is nothing more than a running instance of the image that describes its environment. When you start a container from a Docker image, Docker adds a writable layer on top of the immutable image, and your edits go into that layer. The image that you use to make a container exists on its own and cannot be changed. When you run a container, you effectively get a read-write view of the entire file system and the other dependencies that the image represents. Nothing is copied up front: a file is copied into the container layer only when you modify it (copy-on-write), and the image underneath stays untouched.

From a single base image, you can make any number of Docker images. You can create a new template by stacking tons of additional layers on top of that base image. Also, every time you alter an image's state and save it, a new layer is added. As a result, Docker images are composed of a series of layers, each of which records the differences from the one before it. When you use an image to start a container, the image layers stay read-only and the container's writable layer is attached on top of them.

You have the option of sharing images with others as well. Once you've built a Docker image, you can send it to someone else. That person can use your image to start a new container, and it will run the same way as yours. This is why Docker is such a strong platform for developing and deploying software. Every programmer on a team operates in the same development environment, and each test instance is identical to the production instance. You won't have to waste time troubleshooting problems that only occur in one environment, because the environments are the same.

Docker Hub, the public registry run by Docker, has been filled by developers all over the world who took the opportunity to exchange images with one another. It is a repository of images for a wide variety of containerized software.
There are images available for almost every common piece of software. Adding a few lines to a Dockerfile and waiting for a quick download is all it takes to set up a new Docker application. Anyone can publish an image on Docker Hub, just as they can on other repositories. This means that your team can quickly build a new Docker image and distribute it to team members all over the world. Your testing and development servers can also get their images from Docker Hub. And, as previously mentioned, the image will behave identically regardless of where it is downloaded.
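
The layering described in this section can be pictured with a small toy model. The sketch below is only an illustration and does not use Docker itself: it assumes each layer is a plain Python dict of file paths, and the names `start_container` and `commit` are made up for this example. It shows two containers sharing one image, an edit landing only in one container's writable layer, and a commit producing a new image with one extra layer.

```python
from collections import ChainMap

# Toy model only: each layer is a dict of path -> file content.
# Real Docker layers are filesystem diffs managed by a storage driver.
base_layer = {"/etc/os-release": "ubuntu", "/bin/sh": "shell"}
nginx_layer = {
    "/usr/sbin/nginx": "nginx binary",
    "/var/www/html/index.html": "default page",
}

# An image is an ordered stack of read-only layers (topmost layer first).
image_layers = [nginx_layer, base_layer]


def start_container(layers):
    """Return a container view: one fresh writable layer on top of the image layers."""
    writable = {}
    # ChainMap reads through all maps in order but writes only to the first one.
    return ChainMap(writable, *layers)


def commit(container):
    """Return a new image: a frozen copy of the writable layer on top of the old layers."""
    writable, *lower = container.maps
    return [dict(writable), *lower]


web1 = start_container(image_layers)
web2 = start_container(image_layers)

# Editing a file in one container lands in that container's writable layer only.
web1["/var/www/html/index.html"] = "hello from web1"

print(web1["/var/www/html/index.html"])         # hello from web1
print(web2["/var/www/html/index.html"])         # default page
print(nginx_layer["/var/www/html/index.html"])  # default page (image unchanged)

# Committing web1 yields a new image with one more layer than the original.
custom_image = commit(web1)
web3 = start_container(custom_image)
print(len(custom_image), web3["/var/www/html/index.html"])  # 3 hello from web1
```

The model leaves out a lot (file deletion, layer hashes, storage drivers), but it captures why many containers can share one image cheaply: they all read the same lower layers and only keep their own differences.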

What are Docker Containers?

Docker containers are running instances of Docker images. A Dockerfile is the blueprint in which you list the instructions for building a customized image on top of a base image, and you use the docker build command to build the image from it. Once the image is built, you can use the docker run command to create and start a container from it. The docker start command, by contrast, starts a container that already exists but is stopped.

Docker containers let users separate applications from the underlying host machine. They are virtualized run-time environments running on top of the host OS. These containers are small, portable units that let you start an application quickly and easily. A useful property is that the environment running within the container is standardized. It not only ensures that your application runs the same way every time, but it also makes sharing with teammates a breeze.

Containers are well isolated because they are self-contained, so they do not disrupt other containers or the server that hosts them. These units, according to Docker, "provide the best isolation capabilities in the industry." As a result, you have far less to worry about when it comes to keeping your computer safe while creating an app. Containers virtualize at the operating-system level, unlike virtual machines (VMs), which virtualize the hardware. They run as isolated processes on a single machine and share the host's kernel instead of each booting a full guest OS. As a result, containers are incredibly light, allowing you to save precious resources.

Once you have your container running, you can make changes in the container environment, install packages and libraries, and commit these changes to create a new image in which they are saved as an additional layer. Let's understand this with the help of a simple example. Suppose you want to run an Nginx web server and host simple HTML files on that server inside an Ubuntu environment.
What you can do is use the docker pull command to pull an Ubuntu base image from Docker Hub or any other Docker registry, and then use the docker run command to create a container from the ubuntu image. If you start it interactively, you will have access to a bash shell in the Ubuntu environment, where you can install the Nginx server through the command line. To reach the hosted HTML files from your local machine, publish the container's port when you run it. Alternatively, you can use a Dockerfile to specify the instructions: choosing a base image such as Ubuntu or Nginx, running commands to install libraries, specifying the entry point, etc.

Docker containers, unlike images, are not meant to be shared. They carry all kinds of installed apps and configuration data on top of the image, and those changes live only in the container's own writable layer. If you wanted to move your work from one device to another and simply started a container from the same image on the second computer, it would start from the original configured image, without your changes. Instead, if you want to share your progress in a container, commit the container to create an image and share that. Your work inside a container, on the other hand, does not alter the image it was created from. Files or data that you need to keep beyond the end of a container's existence should be stored in a volume or bind mount, so that you can access them even after the container's life cycle has ended.

As a developer, there is a range of other advantages to using containers. For example, each Docker container on your computer is isolated from the others. Do you need to set up your database server in a very specific way? You don't need to worry about errors or information leaking into your web server: unless you explicitly connect them, for example through a shared volume or network, the two share no data, storage, or file system, even if both containers are running on the same host machine.
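
The Ubuntu and Nginx walkthrough above can be written down as an ordered list of docker CLI calls. The following is a rough sketch rather than a recommended setup: the container name `nginx-box`, the image tag `my-nginx:v1`, and host port 8080 are placeholders chosen for this example, and the container is kept alive with `sleep infinity` so that commands can be run in it non-interactively. The script only prints the commands; `run_workflow` would execute them and assumes the docker CLI is installed.

```python
import shlex
import shutil
import subprocess

# Hypothetical names chosen for this example; they are not from the article.
CONTAINER = "nginx-box"
CUSTOM_IMAGE = "my-nginx:v1"


def nginx_workflow(container=CONTAINER, image=CUSTOM_IMAGE, host_port=8080):
    """Return the docker CLI calls for the Ubuntu + Nginx walkthrough, in order."""
    return [
        # 1. Pull the Ubuntu base image from the registry.
        ["docker", "pull", "ubuntu"],
        # 2. Create and start a named container that stays alive in the background.
        ["docker", "run", "-d", "--name", container, "ubuntu", "sleep", "infinity"],
        # 3. Install Nginx inside the running container.
        ["docker", "exec", container, "bash", "-c",
         "apt-get update && apt-get install -y nginx"],
        # 4. Commit the container so the installed packages become a new image.
        ["docker", "commit", container, image],
        # 5. Start a container from the new image and publish its port 80 on the host.
        ["docker", "run", "-d", "-p", f"{host_port}:80", image,
         "nginx", "-g", "daemon off;"],
    ]


def run_workflow(commands):
    """Run each command in order; stop at the first failure."""
    if shutil.which("docker") is None:
        raise RuntimeError("docker CLI not found on PATH")
    for argv in commands:
        subprocess.run(argv, check=True)


if __name__ == "__main__":
    # Print the commands with shell quoting instead of executing them.
    for argv in nginx_workflow():
        print(shlex.join(argv))
```

Step 4 is the point where a container's changes become shareable: everything before it lives only in the writable layer of `nginx-box`, while the committed image can be pushed to a registry or used to start fresh containers, as in step 5.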

Docker Images vs Containers

It isn't fair to compare images and containers as competing entities when discussing their differences. Both components are interconnected and form part of the Docker platform. If you read the previous sections, which describe images and containers, you should already have a good idea of how the two interact. Images can exist without containers, but a container needs an image in order to exist. As a result, containers are image-dependent and rely on images to build their run-time environment. Both concepts are necessary parts of the process of running a Docker container, and a running container is the final “phase” of that process. In that sense, Docker images govern and shape containers. They work together to get the most out of Docker.

Each image describes an environment that can be shared with anyone around the world. Containers use such images to run applications, which can be simple or complex. Furthermore, some fantastic tools, such as Docker Compose, make it easy to "compose" and run several containers together with just a small config file. To set up a web application, for example, you can use separate images for a database server, caching server, message queue, and application server. Once all of those parts are in place, using an application monitoring tool like Retrace to keep track of them is easy. Docker makes it simple to set up new, similar systems, which reduces development time and speeds up testing.

Docker Images vs Containers: Head to Head Comparison

| Docker Containers | Docker Images |
| --- | --- |
| A container is a writable layer created on top of a Docker image. | Images are composed of read-only layers; we create containers to write on top of them. |
| They are created from, and associated with, Docker images. | They can be either pulled from registries or built from a Dockerfile. |
| They have only one writable layer. | They can have more than one read-only layer. |
| As per our need, we can attach volumes for persistent storage, networks for inter-container communication, etc. to a container at runtime. | We cannot attach these things to immutable images. |
| We need to commit a container to create a new image before sharing it with other team members. | We can simply push them to a registry or save them as tarball files and share them. |
| We can access the command line of a container and execute commands inside its environment. | Images are simply snapshots; we cannot run commands in them directly. |
| Their footprint is the image plus the writable layer, so they grow as data is written. | They are smaller than the containers created from them. |

Wrapping Up!

The two most significant Docker artifacts are Docker containers and Docker images. Containers offer consistency for any application, ensuring that it runs the same way in any setting. Images use a layered file system that is lightweight, since each layer only holds the differences from the layers below it and common layers can be shared between images, which reduces how much has to be stored and downloaded. If you've figured out how to create a container, you'll be able to tell the difference between images and containers with ease. After reading this guide, you should have a solid understanding of Docker images and containers and how they are related.
